In my last Python post, I learned how to get a single webpage from one of my old blogs and convert it from HTML into Markdown. My objective, if you recall, is to take a list of posts from my old blog and convert them into an EPUB.

I chose this task mainly to give me a reasonable goal while learning Python, but I'm also thinking about some of the practical uses for a script like this. For instance, say you had a list of webpages containing primary source transcriptions that you wanted your students to read. A script like the one I'm trying to write could conceivably be used to package all of those sources in a single PDF or EPUB file that could then be distributed to students. The popularity of plugins like Anthologize also indicates that there is a general interest in converting blogs into electronic books, but that plugin only works with WordPress. A Python script could conceivably do this for any blog.

I’m quickly learning, however, that making this script portable will require quite a bit of tweaking. Which is another way of saying "quite a bit of geeky fun"!

Here’s the code I ended with in my last post:

```python
# pandoc-webpage.py
# Requires: pyandoc http://pypi.python.org/pypi/pyandoc/
# (Change path to pandoc binary in core.py before installing package)
# TODO: Iterate over a list of webpages
# TODO: Clean up HTML by removing hard linebreaks
# TODO: Delete header and footer

import urllib2
import pandoc

# Open the desired webpage
url = 'http://mcdaniel.blogs.rice.edu/?p=158'
response = urllib2.urlopen(url)
webContent = response.read()

# Call on pandoc to convert webContent to markdown and write to file
doc = pandoc.Document()
doc.html = webContent
webConverted = doc.markdown
f = open('wendell-phillips.txt','w')
f.write(webConverted)
f.close()
```

This short script accomplished a lot, but it also left me with a text file full of stuff that I don't want in my final output. The `urlopen` line grabbed the entire webpage, including the blog's title and all of the navigation links, but all I really want from each page of my blog is the main text, the post title, and the date it was originally published.

Fortunately, one of the blogs I follow (by George Mason grad student Jeri Wieringa) recently posted a good working tutorial on Beautiful Soup, a Python library that helps you scrape only as much data from a webpage as you actually want.

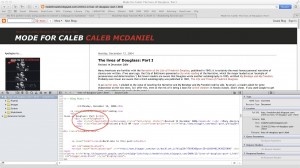

Beautiful Soup works by finding particular tags in an html file and then performing actions on those tags. To use it, I first had to go to my old blog (which was originally hosted on Blogger) and figure out what the underlying HTML on each page looks like. Most browsers make it easy to see what the HTML source of a webpage looks like; in Safari, you can go to the "Develop" menu and select "Show Page Source." This is what it looks like when I view the Page Source for this post on Frederick Douglass:

By studying the source, I noticed that the main body of my blog post was wrapped in a tag called `<div class="blogPost">`. So my next step was to see if I could use the Beautiful Soup tutorial and documentation to get only the text nested inside that tag, instead of the whole webpage with all of its sidebar links, comments, and images. After some fiddling and much trial and error, I got to this code:

```python
soup = BeautifulSoup(webContent)
rawPost = soup.find("div", class_="blogPost")
```

To check my results, I put a `print rawPost` line just after this last line and ran my script again; sure enough, the contents of the `<div class="blogPost">` tag were printed to my Terminal. I also noticed, however, that I was still getting some content I didn't want: namely, the contents of the `<div class="byline">` tag. That's because that tag is nested within the `blogPost` div. After some more fiddling, and with help from the tutorial and Google, I managed to delete this content like this:

```python
byline = soup.find("div", class_="byline")
byline.decompose()
```

Using a similar method, I isolated the title of the post (which happened to be inside the very first `<h2>` tag on the page) and the date of the post (which happened to be inside the very first `<h3>` tag). I assigned these strings to variables of their own, and then I started trying to pass my parsed results into Pandoc, just like I did with the whole webpage in my earlier example code.
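For anyone following along in Python 3 rather than Python 2, here is a minimal sketch of the find/decompose/title/date steps run against a small made-up page. It is not the script from this post: it assumes the `beautifulsoup4` package, the `SAMPLE_HTML` markup and the `extract_post()` helper are hypothetical, and unlike the script it pulls the title and date out as plain text with `get_text()` instead of keeping the `<h2>` and `<h3>` tags.

```python
# Minimal sketch, assuming Python 3 and the beautifulsoup4 package.
# SAMPLE_HTML is a hypothetical stand-in for a Blogger page, not real markup.
from bs4 import BeautifulSoup

SAMPLE_HTML = """
<html><body>
  <h2>Post title</h2>
  <h3>Date of post</h3>
  <div class="blogPost">
    <p>Body of the post.</p>
    <div class="byline">posted by the author</div>
  </div>
  <div class="sidebar"><a href="/">Archive links</a></div>
</body></html>
"""

def extract_post(html):
    """Return (title, date, body_html) for one hypothetical Blogger page."""
    # Naming the parser explicitly avoids bs4's "no parser specified" warning.
    soup = BeautifulSoup(html, "html.parser")
    title = soup.h2.get_text(strip=True)  # text of the first <h2> on the page
    date = soup.h3.get_text(strip=True)   # text of the first <h3> on the page
    # decompose() removes the byline tag and everything inside it from the tree.
    byline = soup.find("div", class_="byline")
    if byline is not None:
        byline.decompose()
    post = soup.find("div", class_="blogPost")
    return title, date, str(post)

title, date, body = extract_post(SAMPLE_HTML)
print(title)              # Post title
print("byline" in body)   # False
```

Because the byline is removed before the `blogPost` div is serialized, the returned body no longer contains it, which is the same ordering the script above relies on.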

At this point I ran into some major hiccups and got a lot of error messages that were difficult to understand. Most of them contained words that I could enter into Google to get a rough idea of what was going on, and it seemed like the problem had to do with the encoding of the text I was feeding into Pandoc, which expects its input to be UTF-8. Fortunately, I learned that in Python 2, calling the `str()` function on a Beautiful Soup object turns it into a UTF-8-encoded string, like this:

```python
post = str(rawPost)
```

Once I solved that problem, most of the rest of the script was easy to put together using the lines I already had in my previous code. I did encounter one problem, though, which is an artifact either of the way I originally entered my posts into Blogger or of the way Blogger renders text. Instead of wrapping each paragraph in `<p>` tags, which is the well-formed-HTML way to do it, many of my posts on Mode for Caleb separate paragraphs with `<br />` tags. Pandoc treats each of these tags as a hard line break, which its Markdown output writes as a backslash at the end of the line. A few tests showed me that these backslashes would cause problems down the road when I try to convert the Markdown to EPUB, so I needed to get rid of them. Drawing partly on one of the scripts Chad showed us last week, I did this with `replace()` before writing the full text to a file.
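The string passed to `replace()` is easy to misread, so here is a small sketch of what `"\\\n"` actually matches. The sample text is made up, and it assumes, as described above, that Pandoc ends each `<br />` line with a backslash.

```python
# Minimal sketch of the backslash cleanup. The sample text is made up and
# assumes Pandoc ended each <br /> line with a backslash.
sample_markdown = "First line\\\nSecond line\n"

# In a regular (non-raw) string literal, "\\\n" is two characters:
# an escaped backslash ("\\") followed by a newline ("\n").
marker = "\\\n"
print(len(marker))  # 2

# Swap each backslash-plus-newline pair for a plain newline.
cleaned = sample_markdown.replace(marker, "\n")
print(repr(cleaned))  # 'First line\nSecond line\n'
```

A bare newline inside a Markdown paragraph is read as a soft line break, so lines that were separated by only a single `<br />` will end up in the same paragraph after this cleanup.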

Here’s what the finished code for this step looks like:

```python
# pandoc-webpage.py
# Requires: pyandoc http://pypi.python.org/pypi/pyandoc/
# (Change path to pandoc binary in core.py before installing package)
# TODO: Iterate over a list of webpages
# TODO: Preserve span formatting from original webpage

import urllib2
import pandoc
from bs4 import BeautifulSoup

# Open the desired webpage
url = "http://modeforcaleb.blogspot.com/2005/08/first-twenty-minutes.html"
response = urllib2.urlopen(url)
webContent = response.read()

# Prepare the downloaded webContent for parsing with Beautiful Soup
soup = BeautifulSoup(webContent)

# Get the title of the post
rawTitle = soup.h2
title = str(rawTitle)

# Get the date from the post
rawDate = "Originally posted on " + soup.h3.string
date = str(rawDate)

# Get rid of the byline div in the post
byline = soup.find("div", class_="byline")
byline.decompose()

# Identify the blogPost section, which should now lack the byline
rawPost = soup.find("div", class_="blogPost")

# Had a lot of problems until I converted rawPost into string, which makes UTF-8
post = str(rawPost)

# Combine the title, date and post body
fulltext = title + date + post

# Call on pandoc to convert fulltext to markdown and write to file
doc = pandoc.Document()
doc.html = fulltext
webConverted = doc.markdown

# Write to file, getting rid of any literal linebreaks
f = open('oldpost.txt','w')
f.write(webConverted.replace("\\\n","\n"))
f.close()
```

You can compare this with my old code to see what's changed. So far things are proceeding well, but I've got a long way to go before I can turn a bunch of posts into an EPUB. For one thing, the linebreak problem I mentioned above is only the first of several bad HTML practices still lurking in the Blogger pages I want to convert. For example, sometimes italicized words are bracketed by `<i>` tags, and sometimes they are bracketed by `<span>` tags, which Pandoc doesn't recognize. Luckily, I now know how to use Beautiful Soup to modify HTML tags and manipulate HTML in Python, so I'm beginning to see how the whole script will end up.
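One way to handle the `<span>` italics is to rename those tags to `<i>` with Beautiful Soup before handing the HTML to Pandoc. This is a minimal sketch in Python 3, not the finished script: it assumes the `beautifulsoup4` package and assumes the italic spans carry an inline `style="font-style: italic;"` attribute (that markup is a guess, so check the real page source first); `italic_spans_to_i()` is a hypothetical helper name.

```python
# Minimal sketch, assuming Python 3 and beautifulsoup4. The italic-span markup
# (style="font-style: italic;") is an assumption about the Blogger pages.
from bs4 import BeautifulSoup

def italic_spans_to_i(html):
    """Rename italic <span> tags to <i> so Pandoc sees ordinary emphasis."""
    soup = BeautifulSoup(html, "html.parser")
    # style=True matches only <span> tags that have a style attribute at all.
    for span in soup.find_all("span", style=True):
        # Normalize the inline CSS so "font-style: italic" and
        # "FONT-STYLE:italic" compare the same way.
        style = span["style"].replace(" ", "").lower()
        if "font-style:italic" in style:
            span.name = "i"    # renaming the Tag changes how it is serialized
            del span["style"]  # <i> no longer needs the inline CSS
    return str(soup)

sample = '<p>I read <span style="font-style: italic;">The Liberator</span> today.</p>'
print(italic_spans_to_i(sample))
# <p>I read <i>The Liberator</i> today.</p>
```

Spans with other styles are left untouched, so this only rewrites the markup that is actually meant as italics.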

I realize, by the way, that this entire post assumes some working familiarity with HTML. If you're interested in what I'm doing here but don't know much about HTML, let me know and I can point you to some resources for getting started.
